
This is a standard machine learning dataset from the UCI Machine Learning repository.

All of the input variables that describe each patient are numerical. This makes the dataset easy to use directly with neural networks, which expect numerical input and output values, and ideal for our first neural network in Keras.

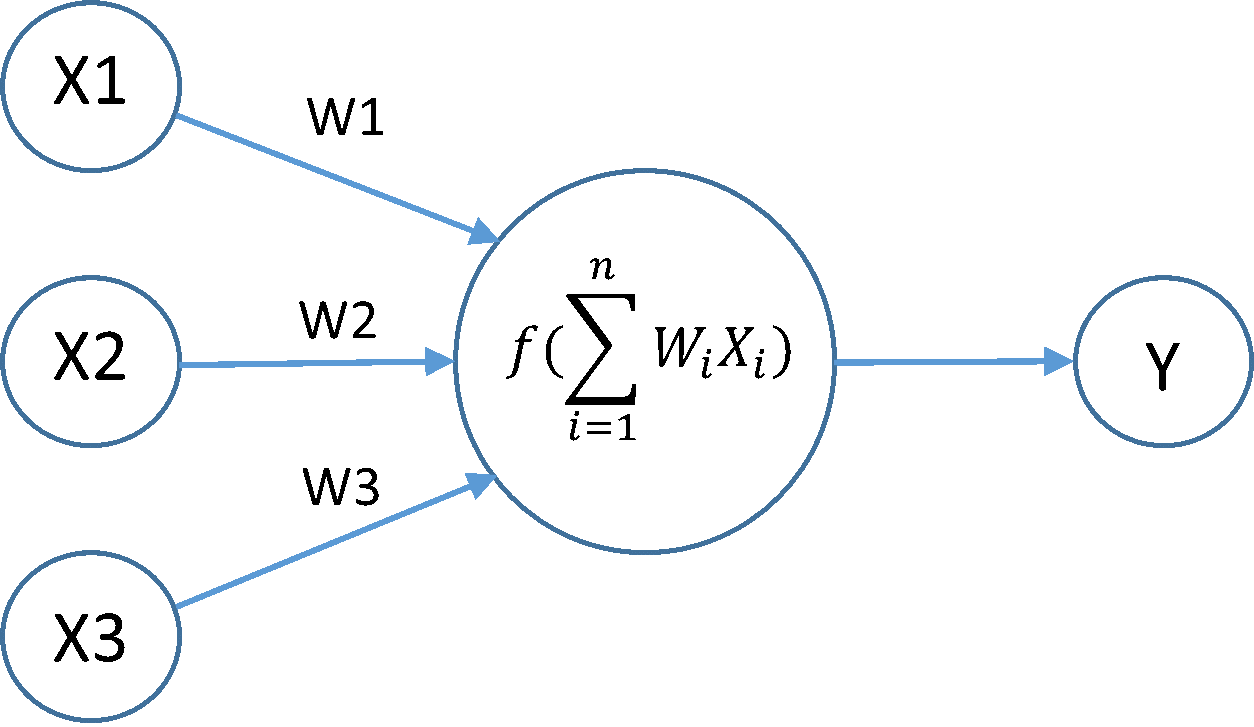

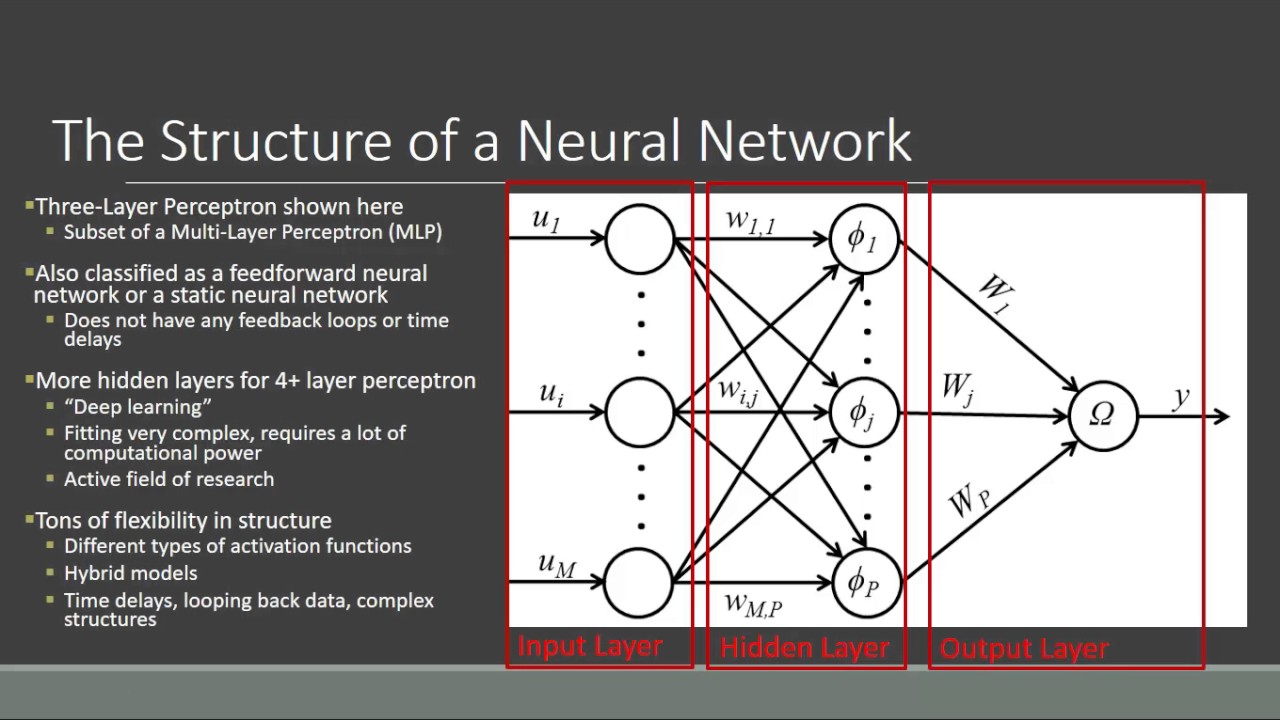

We will be learning a model to map rows of input variables (X) to an output variable (y), which we often summarize as y = f(X). The first 8 columns (indices 0 to 7) hold the input variables. We can then select the output column (the 9th variable) via index 8. If this is new to you, it is worth reading up on array slicing and ranges before continuing.

The first layer needs to know how many input features to expect. This can be specified when creating the first layer with the input_dim argument, setting it to 8 for the 8 input variables.

There are heuristics that we can use, and often the best network structure is found through a process of trial-and-error experimentation. Generally, you need a network large enough to capture the structure of the problem.

For each Dense layer, we can specify the number of neurons or nodes in the layer as the first argument, and specify the activation function using the activation argument. On hidden layers, the ReLU activation function generally gives better performance than the sigmoid and tanh activations that were once preferred. We use a sigmoid on the output layer to ensure our network output is between 0 and 1 and easy to map to either a probability of class 1 or snap to a hard classification of either class with a default threshold of 0.5.

Because the input size is given on the first Dense layer, the line of code that adds that layer is doing 2 things: defining the input or visible layer and the first hidden layer.

The backend that Keras runs on automatically chooses the best way to represent the network for training and making predictions on your hardware, such as CPU, GPU, or even distributed.

Remember that training a network means finding the best set of weights to map inputs to outputs in our dataset. We use cross entropy as the loss. This loss is for binary classification problems and is defined in Keras as binary_crossentropy. The choice of loss function depends on the problem being solved. For the optimizer we use Adam, a popular version of gradient descent because it automatically tunes itself and gives good results in a wide range of problems.
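To make the sigmoid output, the 0.5 threshold, and the binary cross-entropy loss more concrete, here is a minimal sketch in plain Python. It is not how Keras implements them internally; the helper names (`sigmoid`, `to_class`, `binary_crossentropy`), the clipping constant `eps`, and the sample values are all assumptions made for illustration.

```python
import math

def sigmoid(z):
    """Squash a real number into the open interval (0, 1)."""
    # Fine for moderate values of z; very large negative z would overflow.
    return 1.0 / (1.0 + math.exp(-z))

def to_class(probability, threshold=0.5):
    """Snap a predicted probability of class 1 to a hard 0/1 label."""
    return 1 if probability > threshold else 0

def binary_crossentropy(y_true, y_prob, eps=1e-7):
    """Mean binary cross-entropy over paired labels and probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip so log(0) never occurs
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)

raw_outputs = [2.0, -1.0, 0.5]  # made-up pre-activation values
labels = [1, 0, 1]              # made-up true classes

probs = [sigmoid(z) for z in raw_outputs]
preds = [to_class(p) for p in probs]
loss = binary_crossentropy(labels, probs)

print("probabilities:", probs)
print("hard classes: ", preds)
print("loss:         ", loss)
```

The loss shrinks as the predicted probabilities move toward the true labels, which is the quantity the optimizer tries to reduce during training.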
The Adam version of stochastic gradient descent and the difference between epochs and batches are each covered in more detail in separate posts.

Training runs over epochs, each of which is one full pass through the dataset. We must also set the number of dataset rows that are considered before the model weights are updated within each epoch, called the batch size and set using the batch_size argument.

We want to train the model enough so that it learns a good (or good enough) mapping of rows of input data to the output classification.
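The relationship between epochs, batch size, and weight updates can be sketched with a short loop. This is only an illustration of the bookkeeping, not Keras code: the `batches` helper, the 25-row stand-in dataset, and the chosen epoch and batch-size values are assumptions.

```python
import math

def batches(rows, batch_size):
    """Yield consecutive slices of rows, each at most batch_size long."""
    for start in range(0, len(rows), batch_size):
        yield rows[start:start + batch_size]

rows = list(range(25))  # stand-in for 25 dataset rows (made up)
batch_size = 10         # rows considered before each weight update
epochs = 4              # full passes over the dataset

updates = 0
for epoch in range(epochs):
    for batch in batches(rows, batch_size):
        # A real training loop would compute the loss on this batch
        # and update the model weights here.
        updates += 1

updates_per_epoch = math.ceil(len(rows) / batch_size)
print("batch sizes:", [len(b) for b in batches(rows, batch_size)])
print("updates per epoch:", updates_per_epoch)
print("total updates:", updates)
```

A smaller batch size means more weight updates per epoch; the last batch is simply shorter when the row count is not a multiple of the batch size.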

We have trained and evaluated the model on the same data for simplicity, but ideally you would separate your data into train and test datasets, training the model on one and evaluating it on the other.
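One way to separate the data is sketched below in plain Python, assuming each row holds 8 input values followed by the output at index 8. The helpers `split_rows` and `split_inputs_output`, the 25% test fraction, the fixed seed, and the made-up rows are all hypothetical choices for illustration; a library routine would normally do this job.

```python
import random

def split_rows(rows, test_fraction=0.25, seed=1):
    """Shuffle a copy of rows and split it into (train, test) lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # fixed seed for repeatability
    n_test = int(round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]

def split_inputs_output(rows, n_inputs=8):
    """Separate each row into its input columns (X) and output column (y)."""
    X = [row[:n_inputs] for row in rows]
    y = [row[n_inputs] for row in rows]
    return X, y

# Made-up dataset: 12 rows, 8 numeric inputs plus a 0/1 output at index 8.
dataset = [[float(i + j) for j in range(8)] + [i % 2] for i in range(12)]

train_rows, test_rows = split_rows(dataset)
X_train, y_train = split_inputs_output(train_rows)
X_test, y_test = split_inputs_output(test_rows)

print("train rows:", len(X_train), "test rows:", len(X_test))
```

The model would then be fit on `X_train` and `y_train` only, with `X_test` and `y_test` held back to estimate how well it performs on rows it has not seen.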
